DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

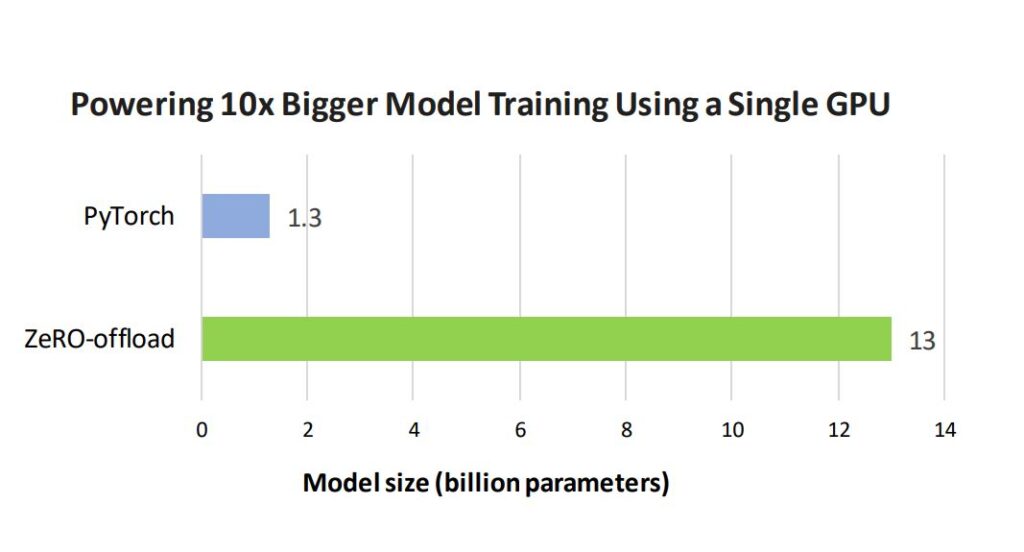

Last month, the DeepSpeed Team announced ZeRO-Infinity, a step forward in training models with tens of trillions of parameters. In addition to creating optimizations for scale, our team strives to introduce features that also improve speed, cost, and usability. As the DeepSpeed optimization library evolves, we are listening to the growing DeepSpeed community to learn […]

[N] Improvement on model's inference from DeepSpeed team. [D] How is Jax compared? : r/MachineLearning

9 libraries for parallel & distributed training/inference of deep learning models, by ML Blogger

DeepSpeed: Extreme-scale model training for everyone - Microsoft Research

Shaden Smith on LinkedIn: DeepSpeed: Accelerating large-scale

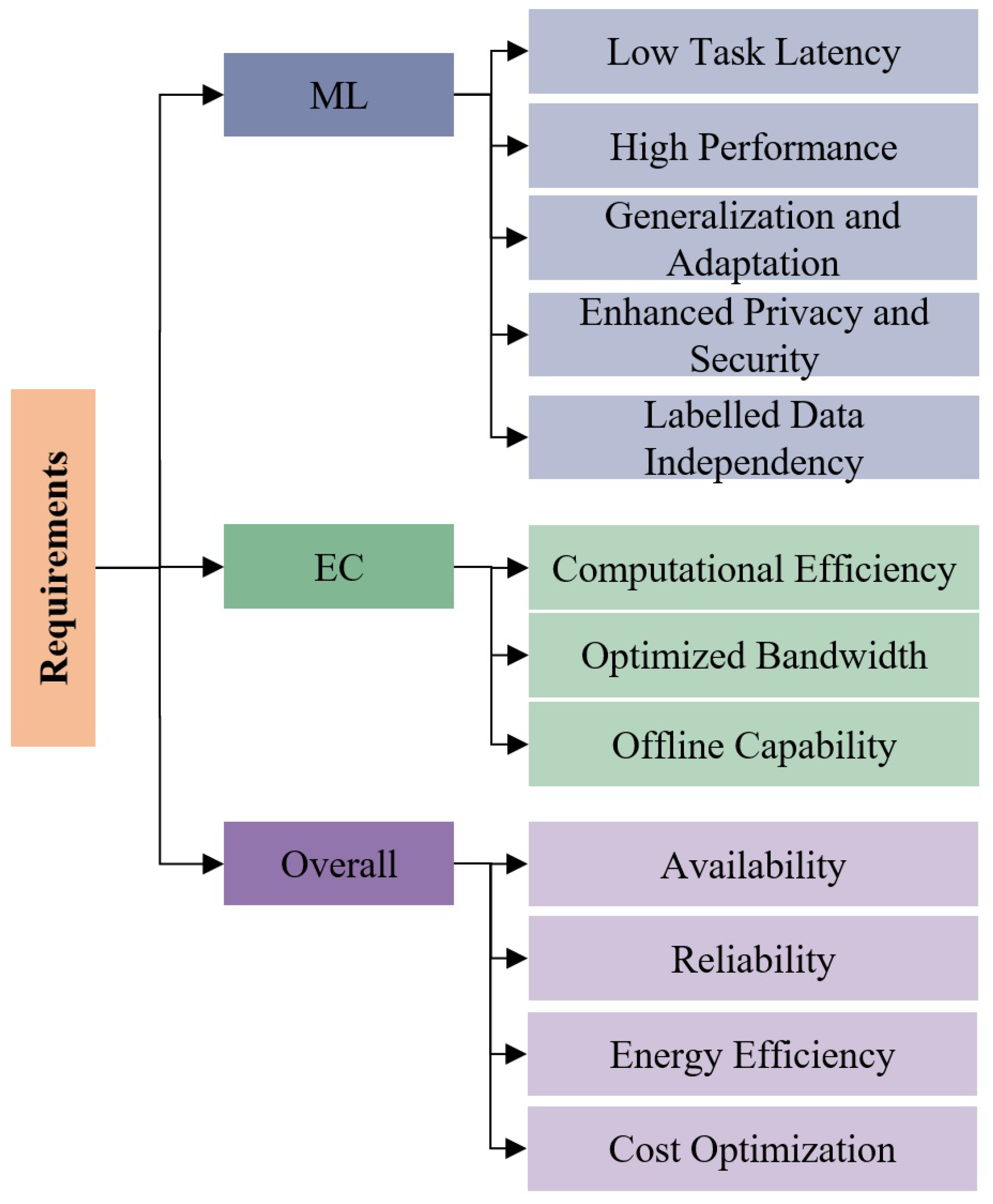

AI, Free Full-Text

Aman's AI Journal • Papers List

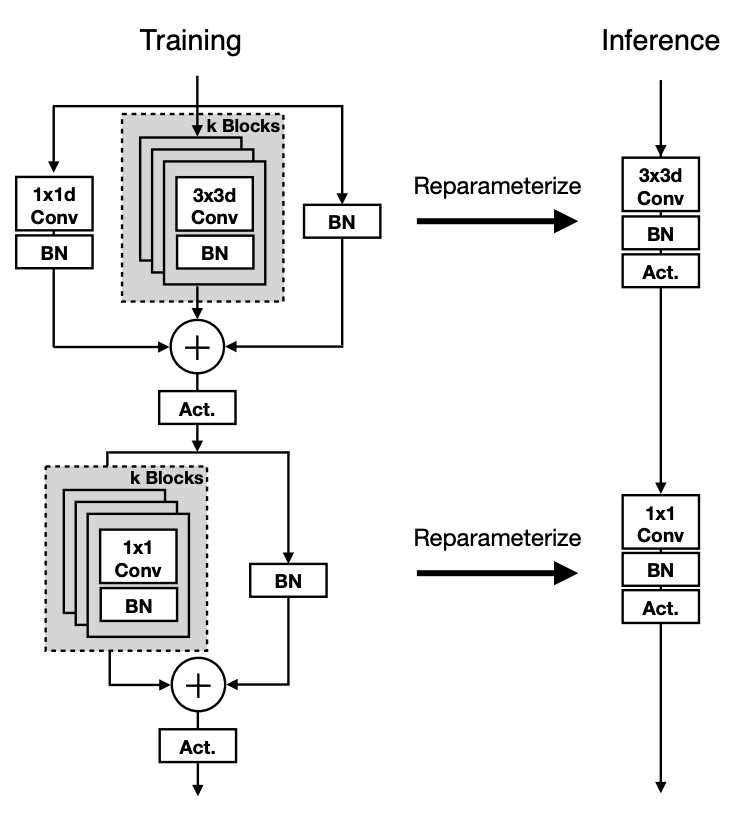

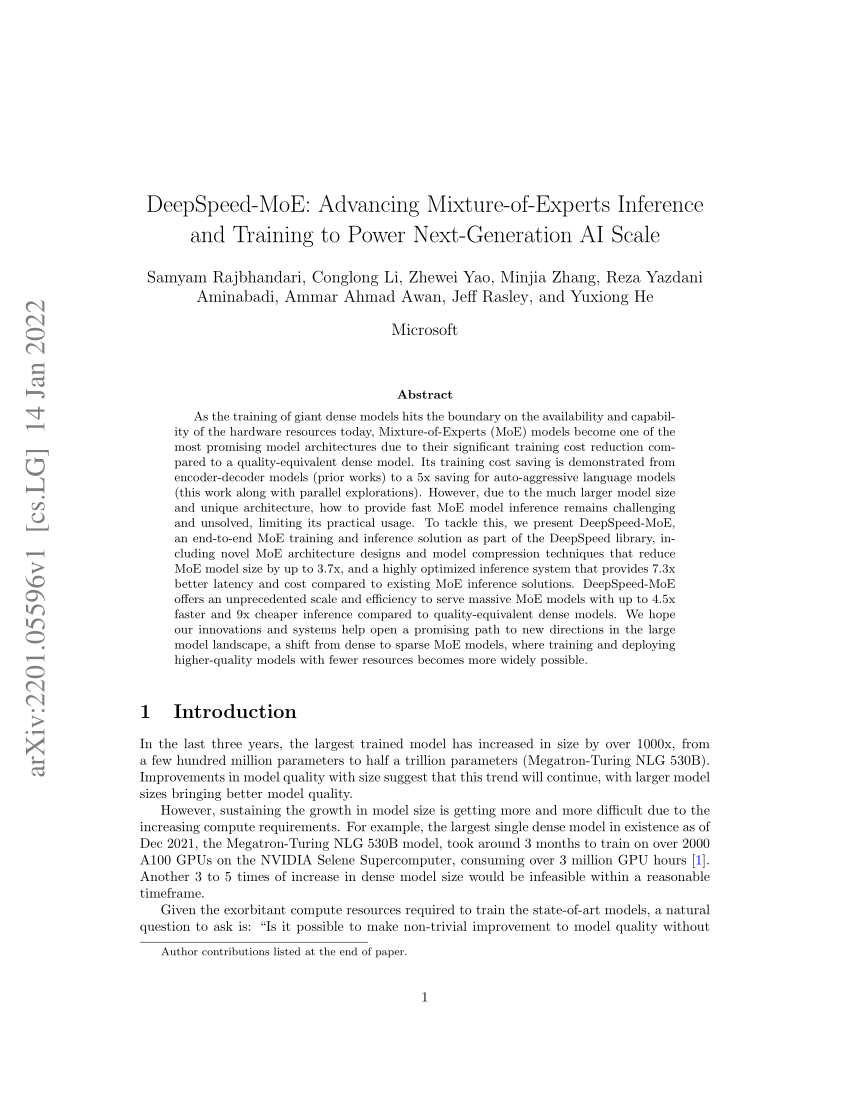

(PDF) DeepSpeed-MoE: Advancing Mixture-of-Experts Inference and Training to Power Next-Generation AI Scale

Ecosystem Day 2021
